Rob Hirschel, Sojo University, Japan

Craig Yamamoto, Sojo University, Japan

Peter Lee, Sojo University, Japan

Hirschel, R., Yamamoto, C., & Lee, P. (2012). Video self-assessment for language learners. Studies in Self-Access Learning Journal, 3(3), 291-309.


Abstract

Students were video-recorded performing similar tasks at both the outset of the academic year in April and towards the end of the year in December. Student participants (N=123) viewed both videos in December and completed identical questionnaires with regard to each video. The questionnaire sought to elicit students’ (1) satisfaction with their English ability, (2) interest in speaking English, (3) ability to interact in English, (4) enjoyment of communication in English, and (5) confidence in speaking English. Mean scores for all items were higher for the December videos, and all of these differences were statistically significant. In a similar survey comparing students’ perceptions of improvement during their eight months of study, learners participating in the video treatment (N=143) reported greater improvement than the control group (N=107) on all items, with items 2, 4, and 5 achieving statistical significance. Initial results appear to indicate that student videos are correlated with a positive effect on students’ interest in, enjoyment of, and confidence in speaking English, but not with perceptions of increased general English ability or ability to interact in English. The findings are applicable to teachers and advisors of individual learners who wish to help their students recognize progress in language learning, which can sometimes seem tenuous.

Keywords: self-assessment, motivation, awareness, video

In the field of self-access language learning, assessment has been found to be one of the “key challenges” because self-access centers provide unique programs for learners who individually decide what activities to pursue, and when, how, and for how long to perform them (Reinders & Lázaro, 2007). In the more traditional classroom, students are often presented with identical resources, at identical times, with identical instructions and identical deadlines; in self-access settings, by contrast, constructing a fair assessment can be a daunting task. In their investigation of 46 self-access centers in five countries, Reinders and Lázaro (2007) found that 24 conducted no assessment whatsoever, whereas the remaining 22 centers employed a variety of assessment measures, with self-assessment comprising 82%. The self-assessments took a number of forms, including questionnaires, learning diaries, assessment grids, and portfolios such as the European Language Portfolio (ELP). In a chapter entitled Learner autonomy, self-assessment and language tests: Towards a new assessment culture, Little (2011) made a compelling argument for self-assessment, but offered only one new instrument, the ELP. Other recent articles by scholars in the field either focused on the assessment of “autonomy” (Benson, 2010) or, as in an article entitled Managing self-access language learning: Principles and practice, made no mention of “assessment” at all (Gardner & Miller, 2011). It was thus in light of the scant attention given to self-assessment in language learning that the authors undertook the present research.

This pilot study aims to explore the possibility of using video assessment for teachers, learning advisors, and students to effectively monitor progress in language learning. For students, self-assessment is viewed as an invaluable way of involving students in the learning and evaluation process, enabling students to become more autonomous and self-directed learners, and giving students the skills to make the most of language learning opportunities (Little, 2005; Ross, 2006). For teachers, learning advisors, and administrators, the videos can similarly be used as tangible products for demonstrating gains.

Video-Stimulated Recall (VSR) has long been used in teacher training and development (Calderhead, 1981; Reitano, 2006). Though no purely objective method of data collection exists (Pirie, 1996, outlined numerous potential biases), VSR has the advantage of recording at least some aspects of classroom performance, and it enables viewers to revisit these data and reflect upon performance, decisions taken, and emotions felt. Reitano (2006) highlighted some of the limitations of VSR, including embarrassment, a fixation on one’s physical appearance, and a fixed mindset that makes objective observation difficult. From the discipline of business management, there have been concerns about the accuracy of self-assessment, with research suggesting that self-assessment is more strongly tied to affective factors than to cognitive ones (Sitzmann, Ely, Brown, & Bauer, 2010). For language learners, however, it is precisely these affective factors that are among the most important (Arnold, 2009), perhaps even more so for the independent language learner (Hurd, 2008). Affective factors aside, Ross (2006) found that with proper training, student self-assessment can be both valid and reliable, and can contribute to greater learning outcomes. Reitano (2006) concludes that VSR “has been shown to be a most effective tool for teachers to reflect on their knowledge in action and to promote professional growth” (p. 10). The authors of the current study believed that the very same tool of video reflection might be used with language learners as well.

Literature Review

MacIntyre, Clément, Dörnyei, and Noels (1998) wrote that the “primary goal of language instruction” (p. 545) is to facilitate communicative use of the second language (L2). It is not a stretch to see that communicative use of an L2 necessarily involves a certain amount of autonomy. Littlewood (1999) explains:

If we define autonomy in educational terms as involving students’ capacity to use their learning independently of teachers, then autonomy would appear to be an incontrovertible goal for learners everywhere, since it is obvious that no students, anywhere, will have their teachers to accompany them throughout life. (p. 73)

An integral part of autonomous learning, regardless of how one may define the term (for definitions see Little, 2007; Littlewood, 1999), is some measure of autonomous assessment or self-assessment. Little (2005) explains that a learner-centered curriculum is incomplete without self-assessment and shared responsibility. Chen (2008) describes the merits of self-assessment in assisting “students to develop knowledge of standards of good work” (p. 238), identifying performance in relation to these standards, and making appropriate choices for further study. Both Little (2005) and Chen emphasize the role of self-assessment in enabling learners to reflect on their strengths and challenges, and consequently develop as informed learners. Chen (2008) specifies the opportunities for growth in the phrase “learning to assess and assessing to learn” (p. 254), whereas Little (2005) describes the process of self-assessment as enabling “learners to turn occasions of target language use into opportunities for further explicit language learning” (p. 322).

In the field of teacher training and development, there have been numerous studies investigating the practice of VSR. Reitano (2006) notes five advantages of VSR that may also apply to the current study: (1) allowing for the reliving of specific episodes in context, (2) enabling both self-reflection and input from others, (3) giving adequate time for reflection, (4) putting the subject in control, and (5) enabling subjects to make explicit what may have previously been understood only implicitly. Though these advantages of VSR are expected to cross over from the realm of teacher training to that of language learning, the authors of the current study were unable to find any research literature focusing upon video use for student self-assessment and learning.

Research Questions

The following research questions were thus proposed:

1. How do students perceive their progress in spoken English after eight months of formal study?

(Progress, in this study, is understood to encompass not only communicative ability, but also the important affective considerations of interest, enjoyment, and confidence.)

2. How do the above perceptions compare with those of students who have not been video recorded?

Methodology

Participants

The participants were drawn from 11 intact classes of first-year students at a Japanese university of sciences and engineering. All participants had two 90-minute periods of English per week for two 15-week semesters. None were English language majors. All participants undertook the same English curriculum taught by the three instructor-researchers.

Two survey measurements were completed, details of which are explained later in this section. For the first survey instrument, a paired samples t-test was used to analyze the two iterations. After removing participants who had been absent for one or more of the video recordings, or for one or more of the video-viewing and questionnaire completion sessions, the number of participants stood at 107.
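As an illustration only, the paired samples t-test used for survey instrument one can be sketched in Python with the standard library. The sketch assumes each participant contributes one rating per administration, listed in the same participant order; the function name `paired_t` and the example ratings are hypothetical and are not the study’s data or analysis software.

```python
import math
import statistics


def paired_t(before, after):
    """Paired-samples t statistic and degrees of freedom.

    `before` and `after` hold one rating per participant (for example,
    hypothetical ratings after viewing the April and December videos),
    listed in the same participant order.
    """
    if len(before) != len(after) or len(before) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    # The test operates on the per-participant differences.
    diffs = [a - b for a, b in zip(after, before)]
    n = len(diffs)
    mean_d = statistics.mean(diffs)
    sd_d = statistics.stdev(diffs)  # sample SD, n - 1 denominator
    if sd_d == 0:
        raise ValueError("all differences are identical; t is undefined")
    t = mean_d / (sd_d / math.sqrt(n))
    return t, n - 1


# Hypothetical ratings for five participants (April, then December).
april = [2, 3, 3, 2, 4]
december = [3, 3, 4, 3, 4]
t, df = paired_t(april, december)
print(f"t = {t:.3f}, df = {df}")  # t = 2.449, df = 4
```

The resulting t is compared with the t distribution on n − 1 degrees of freedom to obtain a p-value; SciPy’s `scipy.stats.ttest_rel`, called with the December ratings first, computes the same statistic and also returns the p-value.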

For the second survey measurement, analyzed via an independent samples t-test, there were 143 participants in the experimental group and 107 participants in the control group (N=250).
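The independent samples t-test used for survey instrument two compares the mean improvement ratings of two separate groups. A minimal standard-library sketch follows, assuming one rating per participant in each group. The helper `independent_t` is hypothetical; its `equal_var` flag switches between the pooled-variance (Student) statistic and the Welch statistic, the two variants that statistics packages typically report side by side.

```python
import math
import statistics


def independent_t(group_a, group_b, equal_var=True):
    """Independent-samples t statistic and degrees of freedom.

    equal_var=True  -> Student's t with pooled variance, df = n1 + n2 - 2
    equal_var=False -> Welch's t with Welch-Satterthwaite df
    """
    n1, n2 = len(group_a), len(group_b)
    if n1 < 2 or n2 < 2:
        raise ValueError("each group needs at least 2 observations")
    m1, m2 = statistics.mean(group_a), statistics.mean(group_b)
    v1, v2 = statistics.variance(group_a), statistics.variance(group_b)
    if v1 == 0 and v2 == 0:
        raise ValueError("both groups have zero variance; t is undefined")
    if equal_var:
        pooled = ((n1 - 1) * v1 + (n2 - 1) * v2) / (n1 + n2 - 2)
        se = math.sqrt(pooled * (1 / n1 + 1 / n2))
        df = n1 + n2 - 2
    else:
        a, b = v1 / n1, v2 / n2  # squared standard error of each mean
        se = math.sqrt(a + b)
        df = (a + b) ** 2 / (a ** 2 / (n1 - 1) + b ** 2 / (n2 - 1))
    return (m1 - m2) / se, df


video = [4, 5, 3, 4, 4]    # hypothetical ratings, treatment group
control = [3, 3, 2, 4, 3]  # hypothetical ratings, control group
print(independent_t(video, control))                   # Student
print(independent_t(video, control, equal_var=False))  # Welch
```

With these hypothetical ratings both variants give t = √5 ≈ 2.236 on 8 degrees of freedom, because the two groups have equal sizes and equal variances. The variants diverge when sizes and variances differ, as they may with groups of 143 and 107 participants.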

Conditions

The participants in the video treatment group were video-recorded twice during the academic year: once at the outset in April and once towards the end of the year in December. The recording times were chosen to provide students and their teachers with viewable data from approximately the beginning and end of their first-year university English studies. The aim of the above research questions was to assess students’ progress in spoken English (understood to be communicative). The participants were therefore asked to record interactions in pairs so that elements of conversation, including interactive ability and confidence in communicating with a partner, could be evaluated. The April video recording entailed pairs of students asking each other about their identity pages (see Appendix A). The December video recording involved pairs of students speaking about common topics (see Appendix B). Following the second video recording in December, the students watched the two videos in successive classes and completed the same questionnaire, comprising the items described in the next section, immediately after each video.

In a third class, the video treatment group participants completed an additional questionnaire after watching the two videos together. The control group responded to the same questionnaire (instrument two) in the absence of any videos. Control group participants experienced the same classes, with the same curriculum taught by the same instructors. The sole difference was the absence of video activities.

Survey Instrument One

The survey instrument, completed twice by the treatment group (N=123), comprised five items translated into Japanese and back-translated for accuracy. The items were chosen and constructed in an effort to assess a robust definition of progress in spoken English comprising both elements of production (general English ability, ability to interact in English) and elements of affect (interest, enjoyment, confidence). Each item was followed by a space to optionally record any comments. The survey instrument was administered online, and the respondents could answer in Japanese or English. The items are shown in Figure 1.

Survey Instrument Two

The survey instrument completed by both the treatment and control groups (N=250) comprised five items translated into Japanese and back-translated to ensure accuracy. The items were constructed to enable comparison between the treatment group, which had recorded, watched, and evaluated their videos, and the control group, which had no video activity. The items are shown in Figure 2.

Results

The descriptive statistics for survey instrument one are presented in Table 1. The average ratings of the participants (N=123) were all slightly higher for the survey administered following viewing of the December video as compared with the survey following viewing of the April video. A paired samples t-test, with values reported in Table 2, indicates that all December gains were statistically significant at the .05 level.

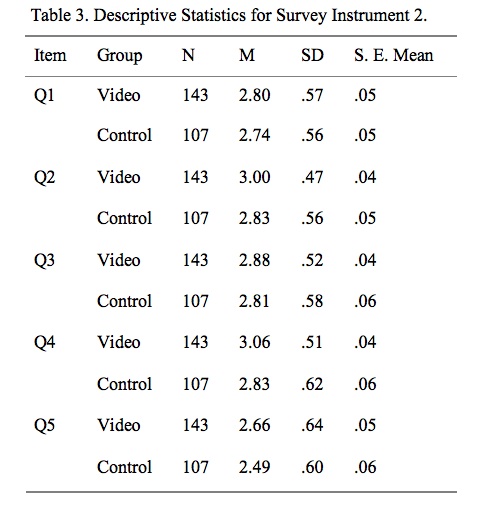

The descriptive statistics for survey instrument two are presented in Table 3. The experimental video group scored marginally higher on all items regarding improvement. An independent samples t-test was performed, with values reported in Table 4. Levene’s Test for Equality of Variances was conducted to determine whether equal variances could be assumed for each item, and the corresponding t-test values were reported. The differences between the two group means were statistically significant at the .05 level for items 2, 4, and 5. These results indicate that learners who participated in the video treatment rated themselves higher, after eight months of study, on measures of interest, enjoyment, and confidence, but not on measures of ability.
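Levene’s test decides which of the two t-test variants to report: a significant result suggests the group variances differ, in which case the “equal variances not assumed” (Welch) values are used. The sketch below computes the mean-centered Levene statistic W for two groups with the standard library only. It does not compute a p-value, which comes from the F distribution with (1, N − 2) degrees of freedom. The names `levene_w` and `choose_t_row`, the default threshold of .05, and the example data are assumptions for illustration, not the study’s procedure.

```python
import statistics


def levene_w(group_a, group_b):
    """Mean-centered Levene statistic W for two groups.

    Under the null hypothesis of equal variances, W approximately follows
    an F distribution with (k - 1, N - k) degrees of freedom; here k = 2.
    """
    groups = [list(group_a), list(group_b)]
    k = len(groups)
    # Absolute deviation of each score from its own group mean.
    z = []
    for g in groups:
        m = statistics.mean(g)
        z.append([abs(y - m) for y in g])
    n_total = sum(len(zg) for zg in z)
    z_means = [statistics.mean(zg) for zg in z]
    z_grand = sum(sum(zg) for zg in z) / n_total
    # One-way ANOVA on the absolute deviations.
    between = sum(len(zg) * (m - z_grand) ** 2 for zg, m in zip(z, z_means))
    within = sum((zij - m) ** 2 for zg, m in zip(z, z_means) for zij in zg)
    if within == 0:
        raise ValueError("no spread in absolute deviations; W is undefined")
    w = (n_total - k) / (k - 1) * between / within
    return w, (k - 1, n_total - k)


def choose_t_row(levene_p, alpha=0.05):
    """Pick the t-test variant to report from Levene's p-value."""
    if levene_p < alpha:
        return "equal variances not assumed"  # i.e. Welch's t
    return "equal variances assumed"  # i.e. pooled (Student's) t
```

With `a = [4, 5, 3, 4, 4]` and `b = [1, 5, 3, 2, 4]`, `levene_w(a, b)` gives W = 3.2 on (1, 8) degrees of freedom; for two groups, W equals the square of the pooled t statistic computed on the absolute deviations. In practice, `scipy.stats.levene(a, b, center='mean')` returns both W and its p-value; SciPy’s default `center='median'` is the Brown-Forsythe variant.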

Qualitative Data

For the purposes of triangulation, participant comments were solicited in the two administrations of survey instrument one. Participants were encouraged to comment in either English or Japanese, and a professional translator translated the Japanese comments. The comments section was both optional and open-ended. Thus, while definitive conclusions cannot be drawn from the comments of the students who elected to respond, the comments can give a broader indication of student perspectives than the Likert-scale data alone.

The comments for each of the five questions on iterations one and two of survey instrument one were first coded independently by the three researchers, then discussed, and finally coded together by the team. Comments for items 2 (interest in speaking English) and 4 (enjoy communicating in English) generally fell along the spectrum of interest in the subject matter (clearly evident, clearly lacking, or not explicitly mentioned). Statements such as “I’m not sure that other people can see, but I’m very interested” were categorized as the student demonstrating an interest in English language learning. Conversely, statements such as “I don’t like English very much” were clearly indicative of students who lacked interest. Given the open-ended nature of the comments, many, such as “I thought English is difficult”, could not be categorized on a scale of interest.

One commonly uncategorizable type of comment had to do with smiling. In the two iterations of the survey, there were twenty instances of “smile”, “smily”, or “smiling” recorded, only two of which were coded for participant interest: “When I speak, I smile and look interested” and “I looked that I was having fun, because I was smiling”. Other comments such as “I was smiling”, “I’m talking with a smile”, and “I could talk with smile” would tend to indicate interest in the subject matter, but without greater context could not be coded as such. Smiling, particularly in the Japanese context, could be construed as a sign of embarrassment or discomfiture (Andrade & Williams, 2009). The three comments “I’m smiling foolishly”, “I’m smiling bitterly”, and “I don’t have smile” might indicate negative feelings, but again, without greater context, could not be coded.

Comments for items 1 (satisfaction with English ability), 3 (ability to interact in English), and 5 (confidence in speaking English) generally aligned along two different dimensions: (a) interest in the subject matter and (b) satisfaction with English ability. Comments that referenced neither interest nor satisfaction with English ability were left uncoded.

Table 5 displays the results of the qualitative portion of this study. Bearing in mind that comments were optional and that coding them can be problematic given their limited context, the results are nonetheless promising. In all instances, satisfaction and interest were registered in higher percentages for the December video iteration than for the April iteration, and dissatisfaction and disinterest in equal or lower percentages for the December iteration. Particularly noteworthy is the large percentage of student responses for the December video indicating interest for items 2 and 4.

Note: + and – refer, respectively, to presence and absence of the coding dimension. unc indicates responses that were uncategorizable with regard to the coding dimension.
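The percentages in Table 5 can be read as simple tallies of the coded comments. The sketch below shows that arithmetic under one assumption not stated in the study: that each percentage is the share of the comments received for a given item and survey iteration. The helper name `code_percentages` and the example codes are hypothetical, and the ASCII hyphen stands in for the table’s minus sign.

```python
from collections import Counter

CATEGORIES = ("+", "-", "unc")  # present, absent, uncategorizable


def code_percentages(codes):
    """Share of comments (in %) falling into each coding category.

    `codes` holds one code per comment for a single item and survey
    iteration, e.g. ["+", "unc", "-"].
    """
    if not codes:
        raise ValueError("no coded comments to tabulate")
    unknown = set(codes) - set(CATEGORIES)
    if unknown:
        raise ValueError(f"unexpected codes: {sorted(unknown)}")
    counts = Counter(codes)
    total = len(codes)
    return {c: 100 * counts[c] / total for c in CATEGORIES}


# Hypothetical codes for one item in one iteration.
print(code_percentages(["+", "+", "-", "unc"]))
# {'+': 50.0, '-': 25.0, 'unc': 25.0}
```

If the study instead used the number of respondents as the denominator, only the `total` line would change.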

Discussion

The first research question asked how students perceived their progress in spoken English after eight months of formal study. The participants from the video treatment group reported higher ratings for the December video on all five items of survey instrument one. These statistically significant findings appear to demonstrate that the experimental group believed they were making progress. These results are promising. There are, however, a number of limitations to these findings, discussed in the next section, and caution is therefore advised in interpreting the results.

The second research question asked how the perceptions of the students in the video treatment group compared with those of similar students in the control group. These findings are somewhat more robust in that there is a control group against which to compare the video treatment group findings. On survey instrument two, eliciting perceptions of improvement, the experimental group rated themselves higher on all five measures (three of which were statistically significant). Items 2, 4, and 5 (eliciting perceptions of interest in speaking English, enjoyment of English communication, and confidence in speaking English, respectively) all showed small but statistically significant differences when compared against the control group. This finding leads the researchers to believe that the video treatment had some positive effects on how learners rated their interest, enjoyment, and confidence in communicating in English.

Conversely, for items 1 and 3 (both eliciting perceptions of ability), no statistically significant difference was found. Thus, while the video treatment appears to have led to higher self-ratings on affective measures, there appears to be no difference with regard to measures of ability.

The qualitative results tended to validate the results from the survey instruments, particularly for items 2 and 4 (evaluating interest and enjoyment, respectively). For the December iteration of survey one, responses such as “I looked [like] I was enjoying it”, “I can’t speak English well, but I like to speak”, and “I have more interest than the last time” were common for item 2. For item 4, typical responses included “I enjoyed the conversation”, “I think that speaking English is difficult but fun”, and “I was nervous but excited to do it”. The responses for item 5 with regard to confidence were somewhat more circumspect, with only eleven participants making statements such as “I think that I am better than I was”, “I could talk with [confidence] looking at my partner”, and “I could talk without hesitation”. Though the participants appeared relatively reluctant to express satisfaction with their current ability, many of the students clearly expressed interest in studying English throughout their responses to the five survey items. Often-recorded responses such as “I’m interested in English but I can’t really speak English” and “I want to improve my English and gain confidence” underscore this point.

Limitations

As with any research into language learner perceptions, there are a number of inherent limitations. First, there is the concern that students may not be particularly accurate assessors of their own progress. Sitzmann et al. (2010) have questioned whether self-assessments are in fact more of an affective judgment than a cognitive one. Given that the statistically significant differences between the video treatment and control groups in this study were indeed for affective factors rather than for those concerning ability, perhaps this limitation is not as worrying. In future studies, it may be useful to provide thorough training in self-assessment so that students can achieve greater validity and reliability in their own assessments (Ross, 2006).

A second concern is that all students were clearly aware of which video was taken in April and which was taken in December. It is thus possible that student expectations led them to assume improvement when, in fact, none may have existed. Having invested eight months of study in their English, learners may find it difficult not to give themselves higher scores on the second iteration of survey one. Conversely, non-English-major students who have been required to take English might have been disaffected with the subject matter and may have given themselves equal or lower scores in the second iteration of survey one, regardless of any actual improvement. As there is little possibility of controlling for students’ knowledge of which video was taken when, the best way of ensuring valid and reliable self-assessments is, again, through comprehensive self-assessment training.

A third limitation relates to the timing of both iterations of survey one. The recorded videos from April and December were both viewed and evaluated by students in December in successive classes. The proximity of the viewings and the elapsed time from the April recording may have affected students’ evaluations. The authors’ follow-up research will have students view and evaluate their performances shortly after the recordings.

Finally, there are practical matters to consider. The April recordings were made in the classroom (multiple pairs at once) with no external microphone, and the audio quality was often problematic. The December recordings, by contrast, were made in auxiliary rooms and were much more audible. The next study will see both sets of recordings made in auxiliary rooms for maximum clarity.

Conclusion

The above limitations notwithstanding, this study has yielded some important and tangible results. Taken together, the qualitative and quantitative data point to a pattern whereby, over the eight-month course of study, students appeared to develop a greater interest in and enjoyment of communicating in English. Slightly increased levels of confidence were also apparent. Particularly noteworthy was that participants in the video treatment group perceived gains in interest, enjoyment, and confidence that the control group participants did not.

What has not been sufficiently demonstrated is a perception of gain in satisfaction with general English ability or in the belief that the student has an increased ability to interact in English. The results for video treatment group participants were not statistically different from those of the control group for those measures. Frequent survey responses such as “I can’t communicate well yet” suggest that, although students may not appreciate gains in their ability, they may have made gains in terms of their interest in the subject matter.

There are a number of potential explanations for students being unable to perceive gains in actual ability. Perhaps the most obvious (and the most disconcerting for teachers) is that there was no gain. The authors believe, however, that gains in communicative ability in a short-term EFL setting are rather difficult to pinpoint, especially in the absence of any quantifiable measurement. Students, particularly in the absence of training, may be unable to objectively measure their own gains (Sitzmann et al., 2010). A child, by way of analogy, may have experienced vertical growth in an eight-month period. With no chart to assist her, however, that growth may be imperceptible, particularly if the child’s friends are growing as well.

What this study has clearly demonstrated is that intermittent video recordings can assist students in identifying gains of interest in, enjoyment of, and confidence with using English. Particularly in the absence of other concrete measures of demonstrating gain, these video recordings may better enable learners to realize the often elusive progress they are making in their language studies. This progress, even if it is more affective than cognitive, can provide a substantial boost in motivation for students.

Future studies should address the limitations described in the previous section, including training students to be competent raters of their own performance, viewing and evaluating the videos shortly after recording, and considering practical matters such as recording quality. Further research should also seek to identify students’ perspectives on the self-assessment training, video recording and viewing, and evaluation process in order to determine whether or not students believe these activities to be valuable. Researchers may want to consider using qualitative data collection methods such as interviews and focus groups in order to provide more contextual information for better coding and analyzing of the data. Finally, it would be interesting to see research that compares students’ self-evaluations on measures of ability against those of qualified and independent instructors. The authors of this pilot study are currently pursuing a revised replication study in order to tackle some of these challenges.

Notes on the contributors

Rob Hirschel is a lecturer at the Sojo International Learning Center (SILC) of Sojo University in Kumamoto, Japan. His research interests include assessment, affective factors in the language classroom, error correction, and CALL.

Craig Yamamoto is currently a lecturer at Sojo University in Kumamoto, Japan. He has been a manager, teacher and trainer for teachers in EFL/ESL since 1996 at the primary, secondary, and post-secondary levels. His research interests include assessment, training, and learner motivation.

Peter Lee is currently a lecturer at Sojo University in Kumamoto, Japan. He has been teaching EFL/ESL for more than 10 years at the primary, secondary, and post-secondary levels. His research interests include evaluation, listening, and CALL.

References

Andrade, M., & Williams, K. (2009). Foreign language learning anxiety in Japanese EFL university classes: Physical, emotional, expressive, and verbal reactions. Sophia Junior College Faculty Journal, 29, 1-24. Retrieved from http://www.jrc.sophia.ac.jp/courses/pdf/ver2901.pdf

Arnold, J. (2009). Affect in L2 learning and teaching. ELIA, 9, 145-151. Retrieved from http://institucional.us.es/revistas/elia/9/8.%20Arnold.pdf

Benson, P. (2010). Measuring autonomy: Should we put our ability to the test? In A. Paran & L. Sercu (Eds.), Testing the untestable in language education (pp. 77-97). Bristol, UK: Multilingual Matters.

Calderhead, J. (1981). Stimulated recall: A method for research on teaching. British Journal of Educational Psychology, 51, 211-217. doi:10.1111/j.2044-8279.1981.tb02474.x

Chen, Y.-M. (2008). Learning to self-assess oral performance in English: A longitudinal case study. Language Teaching Research, 12, 235-262. doi:10.1177/1362168807086293

Gardner, D., & Miller, L. (2011). Managing self-access language learning: Principles and practice. System, 39, 78-89. doi:10.1016/j.system.2011.01.010

Hurd, S. (2008). Affect and strategy use in independent learning. In S. Hurd & T. Lewis (Eds.), Language learning strategies in independent settings (pp. 218-236). Bristol, UK: Multilingual Matters. Retrieved from http://oro.open.ac.uk/10049/1/Affect%26StrategyUseinIndependentLearning.pdf

Little, D. (2005). The Common European Framework and the European Language Portfolio: Involving learners and their judgements in the assessment process. Language Testing, 22, 321-336. doi:10.1191/0265532205lt311oa

Little, D. (2007). Language learner autonomy: Some fundamental considerations revisited. Innovation in Language Learning and Teaching, 1, 14-29. doi:10.2167/illt040.0

Little, D. (2011). Learner autonomy, self-assessment and language tests: Towards a new assessment culture. In B. Morrison (Ed.), Independent language learning: Building on experience, seeking new perspectives (pp. 25-39). Hong Kong, China: Hong Kong University Press. doi:10.5790/hongkong/9789888083640.003.0003

Littlewood, W. (1999). Defining and developing autonomy in East Asian contexts. Applied Linguistics, 20, 71-94. doi:10.1093/applin/20.1.71

MacIntyre, P., Clément, R., Dörnyei, Z., & Noels, K. (1998). Conceptualizing willingness to communicate in a L2: A situational model of L2 confidence and affiliation. The Modern Language Journal, 82, 545-562. doi:10.1111/j.1540-4781.1998.tb05543.x

Pirie, S. (1996, October). Classroom video-recording: When, why and how does it offer a valuable data source for qualitative research? Paper presented at the Annual Meeting of the North American Chapter of the International Group for Psychology of Mathematics Education, Panama City, Florida.

Reinders, H., & Lázaro, N. (2007). Current approaches to assessment in self-access language learning. TESL-EJ, 11, 1-13. Retrieved from http://www.cc.kyoto-su.ac.jp/information/tesl-ej/ej43/a2.pdf

Reitano, P. (2006, December). The value of video stimulated recall in reflective teaching practices. Paper presented at the Social Science Methodology Conference, University of Sydney, Australia.

Ross, J. A. (2006). The reliability, validity, and utility of self-assessment. Practical Assessment Research & Evaluation, 11, 1-13. Retrieved from https://exams.library.utoronto.ca/handle/1807/30005

Sitzmann, T., Ely, K., Brown, K. G., & Bauer, K. N. (2010). Self-assessment of knowledge: A cognitive learning or affective measure? Academy of Management Learning & Education, 9, 169-191. doi:10.5465/AMLE.2010.51428542

Appendices

(See PDF version of the article)