Atsumi Yamaguchi, Meijo University, Nagoya, Japan

Erin Okamoto, Woosong University, Daejeon, South Korea

Neil Curry, Kanda University of International Studies, Chiba, Japan

Katsuyuki Konno, Ryukoku University, Shiga, Japan

Yamaguchi, A., Okamoto, E., Curry, N., & Konno, K. (2019). Adapting a checklist of materials evaluation for a self-access center in Japan. Studies in Self-Access Learning Journal, 10(4), 339-355. https://doi.org/10.37237/100403


Abstract

Materials evaluation calls for a systematic and principled approach. In practice, however, good models of materials evaluation for language-learning self-access centers (SACs) are scarce. This paper reports on a project undertaken by SAC facilitators in Japan to investigate whether and how a pre-evaluation checklist developed a decade ago at a SAC in New Zealand (cf. Reinders & Lewis, 2006) could be adapted to their own context. A mixed-methods approach was employed, with data obtained from a Likert-scale questionnaire and follow-up interviews. The questionnaire, adapted from Reinders and Lewis, was completed by 103 Japanese university students, and interviews were then conducted with eight randomly selected survey respondents. The results show that the model checklist can serve as a basis, provided it is modified to allow for contextual differences. They also suggest that Japanese learners of English value visually stimulating materials and need more guided support to use materials effectively beyond the classroom. The article provides an adapted checklist designed for Japanese learners of English as well as suggestions for future research.

Keywords: materials evaluation, self-access center, Japanese EFL learners, mixed methods

Self-access centers (SACs) have long played an important role in English language education, particularly in supporting learner autonomy (Benson, 2011; Kato & Mynard, 2016). According to Gardner and Miller (1999), established SACs typically comprise a stock of physical learning materials, human resources (teachers, advisors, and other learners), management systems, and other support. While all of these elements are indispensable for SAC users, the quality of a SAC’s resources may have a significant impact on learning outcomes (Reinders & Lewis, 2005). Reinders and Lewis (2005) also affirm that learning materials account for a substantial portion of institutional SAC budgets, and they endorse Gardner’s (1999) suggestion that resources be systematically evaluated and replaced according to their ability (or inability) to support learners. Building on the accounts of other established SACs, this study investigates our target learners’ needs and wants regarding learning materials, with the eventual aim of achieving more principled, informed materials evaluation. We begin with a review of the literature on materials evaluation.

Materials Evaluation in Classrooms and its Application in Self-Access Contexts

Materials evaluation has mainly been discussed in relation to the classroom. It has been defined as “a procedure that involves measuring the value (or potential value) of a set of learning materials” (Tomlinson, 2013a, p. 21), and Tomlinson (2013b) claims that materials evaluation is likely to be initiated in an “ad hoc” way because teachers ordinarily choose new coursebooks by browsing in bookstores or catalogues. However, teachers’ (i.e., evaluators’) principles need to be clear so that their evaluation can be systematic and reliable (p. 31) and a more objective, principled approach to materials evaluation can take place. The same principle should apply to materials evaluation in SACs.

Taking this approach, evaluators need to consider four dimensions systematically (Mishan & Timmis, 2015): 1) What is evaluated; 2) When the evaluation takes place; 3) How the evaluation is implemented; and 4) Who carries out the evaluation. Comparisons between classroom and SAC settings regarding these dimensions are shown in Table 1.

Table 1

A Four-Dimension Comparison Between Classroom and SAC Settings

Like classroom teachers, SAC facilitators need to evaluate various types of materials for their strengths and weaknesses, as well as decide when to evaluate them. SACs tend to hold many types of materials in order to cater to a large and diverse body of users. Regarding timing in the classroom context, Mishan and Timmis (2015) note that pre-evaluation seems to be the most common because it is easier to undertake, but that it can be detrimental because it is likely to rely heavily on teachers’ impressions. Tomlinson (2013b) explains that whilst-use evaluation tends to be more “objective and reliable” than the predictions made in pre-use evaluation, because teachers measure the value of materials while they are being used, or gather information by observing them in use (p. 32). However, observation alone cannot tell us what is actually happening in learners’ thought processes. Post-use evaluation may therefore be more valuable because “it can measure the actual effects of the materials on the users” (Tomlinson, 2013b, p. 33), although it can be time-consuming to carry out.

For this study, we judged pre-evaluation to be the most suitable, because our present aim is to develop a practical tool that can be used with a large number of materials and a broad, non-specific population of learners. Whilst- and post-use evaluation instruments will need to be developed once a pre-evaluation instrument has been created; however, that is beyond the scope of this study. With respect to how evaluation takes place, we found few empirical examples of SAC materials evaluation, whereas multiple approaches have been proposed and implemented in the classroom context. The most common practice among them appears to be developing evaluation criteria. Mishan and Timmis (2015) suggest that criteria making evaluators’ values explicit can be generated first, and evaluation instruments subsequently developed from them. According to them, such criteria are often associated with a “checklist method” (p. 61), in which a checklist is developed that is tied specifically to those criteria. This is a long and complex process, not the “easy and quick” one that the term ‘checklist’ may suggest. Many such models (cf. Gardner & Miller, 1999; Jones, 1993; Lockwood, 1998; Sheerin, 1989), perhaps lacking rigorous development processes, appear to have critical limitations and to need further development because they are too time-consuming, too general, or too lengthy, or show low reliability (Reinders & Lewis, 2006). Among the checklists in the available literature, we found the pre-evaluation checklist developed by Reinders and Lewis (2006) to be the most rigorous and reliable because of its systematic development process. The next section describes that process and how the checklist was modified for the Japanese SAC context.

A Checklist Developed at a University English SAC in New Zealand

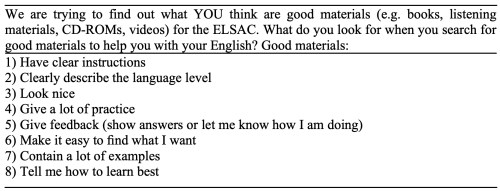

Reinders and Lewis (2006) developed a pre-evaluation tool to evaluate materials in a university English language SAC for ESL learners (called ELSAC) in New Zealand. Its context is similar to ours in terms of target language, learner age, and services provided (e.g., language advisory sessions, workshops, number of learning resources), although the learners’ linguistic and cultural backgrounds and their involvement in target-language use may differ significantly. They reviewed and evaluated six checklists found in the literature and adapted five features into their new checklist. Before the project, they had asked SAC facilitators about good features of SAC materials, but later found that asking learners directly was a better approach (Reinders & Cotterall, 2001). They then administered a four-point Likert-scale questionnaire, developed in an earlier project (Reinders & Lewis, 2005), to 20 randomly selected ELSAC users. Table 2 shows their survey items.

Table 2

Reinders and Lewis’ ‘Good Materials’ Questionnaire (2006, p. 275)

They kept the items short and simple so that the instrument would be practical and not time-consuming for checklist users. Open-ended questions were avoided because they are ineffective when learners are asked about learning materials in general rather than about particular materials. Their results are shown in Table 3.

Table 3

The Percentages of ELSAC Learners’ Responses to Each Question Item

Note. N = 20. All numbers in the table represent percentages. Row sums may not equal 100% because of rounding. The rating average was calculated by multiplying the number of responses at each scale point by its value (1: very important, 2: important, 3: a little bit important, 4: not so important), summing these products across the row, and dividing by 20. A lower rating average indicates that learners perceived the item as more important.


(Adapted from Reinders and Lewis, 2006, p. 275)
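The rating average in the table note is a weighted mean of the scale values, and the percentages are rounded independently, which is why a row need not sum to 100%. The following is a minimal Python sketch of both calculations, assuming the number of respondents at each scale point is available; the function names and example counts are hypothetical and are not taken from Table 3.

```python
def rating_average(counts):
    """Weighted mean of Likert scale values.

    counts[i] is the number of respondents who chose scale value i + 1
    (1 = very important, 2 = important, 3 = a little bit important,
    4 = not so important). A lower average means higher perceived importance.
    """
    n = sum(counts)
    if n == 0:
        raise ValueError("no responses")
    total = sum(value * count for value, count in enumerate(counts, start=1))
    return total / n


def percentages(counts):
    """Percentage of respondents at each scale point, rounded to whole numbers.

    Each value is rounded independently, so a row may not sum to exactly 100.
    """
    n = sum(counts)
    return [round(100 * count / n) for count in counts]


if __name__ == "__main__":
    # Hypothetical counts for one item from 20 respondents (not real data).
    example = [10, 6, 3, 1]
    print(rating_average(example))   # (10*1 + 6*2 + 3*3 + 1*4) / 20
    print(percentages(example))
```

For the ELSAC data the divisor is 20; for the SALC data it is 103.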

Because the rating average for Item 3 (“Look nice”) was higher than those of the other items, indicating lower perceived importance, they concluded that their target learners did not consider it important for materials to ‘look nice.’ They therefore eliminated the category of visual presentation of learning materials from their checklist. Table 4 shows the refined checklist they developed through repeated discussions and trials and after checking inter-rater reliability. They worded the features so that they would apply to all proficiency levels, and avoided points that were too subjective or too difficult to answer quickly.

Table 4

The Checklist Developed by Reinders and Lewis (2006)

Although we acknowledge that the checklist is dated and that SAC resources may have changed and evolved since then, it remains the most recent and most rigorous checklist we found in the literature, particularly as no follow-up studies appear to have been published. The next section describes the context of the present study.

The Present Study

The present study is set in a university-affiliated SAC in Chiba, Japan, which aims to foster learners’ English language acquisition. Established in 2001, the Self-Access Learning Centre (SALC) offers a range of English language learning materials and services, including language advisory sessions, speaking practice and writing support, and student-led learning communities. At the time of this study in 2015, its materials and resources numbered approximately 8,000 items, comprising didactic materials, authentic materials, in-house materials, a small selection of ‘self-access’ labeled materials, and EFL materials specifically designed for Japanese L1 learners. The selection and provision of materials are mainly handled by Learning Advisors (LAs), who help users locate suitable materials and resources and devise general and materials-specific learning strategies for them. Administrative Managers (AMs) are also involved in purchasing and maintenance.

For some time, LAs and AMs had operated under a previously established set of policies that relied mostly on facilitators’ ad hoc perceptions and/or the physical condition of materials. This prompted a team of four LAs to develop a more objective, criterion-based checklist. As described earlier, developing a rigorous and reliable checklist is a lengthy process. We therefore decided to examine the adaptability of Reinders and Lewis’ checklist, because its criteria appear to be the most systematically developed. However, as discussed earlier, although our target learners share some similarities with ELSAC learners, they differ in cultural background and in their experience of learning and using English. We therefore planned first to administer Reinders and Lewis’ ‘Good Materials’ Questionnaire (Table 2) to discover whether our learners’ perceptions of good materials were similar to those of ELSAC learners. The research questions are as follows:

- Can Reinders and Lewis’ checklist be used as a foundation for our checklist?

- Which features from the modeled checklist are subject to modification?

- How can the identified features be reformulated to be more suitable for SALC users?

Method

Although the Reinders and Lewis checklist was well developed, we found a couple of ways in which their data collection could be improved. First, whereas they administered the survey to 20 students, a larger sample would better represent the wider population of SAC users. Second, they did not collect qualitative data from the survey participants, and we thought such data would provide useful information for developing a checklist, so we decided to conduct follow-up interviews. We therefore employed a mixed-methods approach (Creswell, 2015), specifically an explanatory sequential design, in which qualitative data are used to elaborate on the findings of the quantitative analysis. We administered Reinders and Lewis’ questionnaire, translated into Japanese, to explore our target learners’ preferences for learning materials in the SALC. At this stage we used the survey without modification, because we aimed to compare the distributions of SALC users’ responses with those of ELSAC users in order to identify which features of the checklist, if any, warranted further qualitative investigation for possible change. It consisted of eight statements, and respondents were asked to rate the importance of each on a 4-point Likert scale: 1 = “very important,” 2 = “important,” 3 = “a little bit important,” and 4 = “not so important.” The survey was administered campus-wide via SurveyMonkey to all enrolled students at the university in Chiba, Japan, to which the SALC belongs, and was announced on a university portal site open to all students. A total of 103 learners responded to the questionnaire. After the data were gathered, the number of respondents at each Likert point was counted.
Cross tabulations were then performed between corresponding items in the present study and in Reinders and Lewis (2006) to see whether response tendencies differed between the two studies, and a non-parametric test was used to examine these differences statistically. Non-parametric tests allow researchers to analyze data that do not satisfy parametric assumptions, such as normally distributed data and equal variances across groups, and even categorical data (e.g., Mizumoto, 2010). While the chi-square test is a typical choice for cross tabulations like those in the present study, it is not robust when the sample size is small. When the sample is small and some cells have expected frequencies below 5, it is recommended that the p value be calculated with Fisher’s exact test. Because one of the groups was small (N = 20) and some cells contained no respondents (e.g., for Items 6 and 7, no SALC students chose “Not so important”), we used Fisher’s exact test to obtain more precise p values.
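Each comparison described above is a 2 × 4 table (two groups by four scale points). Fisher’s exact test is best known for 2 × 2 tables; for larger tables the usual generalization is the Freeman-Halton extension, which sums the probabilities of all tables with the observed margins that are no more likely than the observed one. The article does not state which software was used, so the following is only a minimal, standard-library Python sketch under the assumption that each row holds raw response counts (not percentages). The function names and example responses are hypothetical and are not data from either study.

```python
from collections import Counter
from itertools import product
from math import comb


def tally(responses, scale=(1, 2, 3, 4)):
    """Count responses at each Likert point (1 = very important ... 4 = not so important)."""
    counts = Counter(responses)
    return [counts.get(point, 0) for point in scale]


def table_weight(first_row, col_totals):
    """Integer numerator of the probability of a 2 x k table with fixed margins.

    P(table) = prod_j C(c_j, a_j) / C(N, n1), where a_j are the first-row
    cells, c_j the column totals, N the grand total and n1 the first-row
    total. The denominator is shared by every table, so exact integers can
    be compared directly without any floating-point tolerance.
    """
    weight = 1
    for c, a in zip(col_totals, first_row):
        weight *= comb(c, a)
    return weight


def fisher_exact_2xk(row1, row2):
    """Two-sided exact p value for a 2 x k table (Freeman-Halton extension).

    Sums the probabilities of all tables sharing the observed margins whose
    probability is no greater than that of the observed table.
    """
    if len(row1) != len(row2):
        raise ValueError("rows must have the same number of columns")
    col_totals = [a + b for a, b in zip(row1, row2)]
    n1 = sum(row1)
    n = sum(col_totals)
    observed = table_weight(row1, col_totals)
    extreme = 0
    # Enumerate every admissible first row: the first k-1 cells vary within
    # their bounds and the last cell is fixed by the row total n1.
    ranges = [range(min(c, n1) + 1) for c in col_totals[:-1]]
    for head in product(*ranges):
        last = n1 - sum(head)
        if 0 <= last <= col_totals[-1]:
            weight = table_weight(head + (last,), col_totals)
            if weight <= observed:
                extreme += weight
    return extreme / comb(n, n1)


if __name__ == "__main__":
    # Hypothetical responses to one item; NOT data from either study.
    small_group = tally([1, 1, 2, 2, 2, 3, 4, 1, 2, 1])
    large_group = tally([1, 2, 2, 3, 3, 4, 4, 4, 3, 2, 4, 3])
    print("counts:", small_group, large_group)
    print(f"p = {fisher_exact_2xk(small_group, large_group):.3f}")
```

Passing the smaller group as `row1` keeps the enumeration short, because every cell in that row is bounded by the group size. Exhaustive enumeration is adequate for samples of the size reported here; a dedicated statistics package would normally be used for larger tables.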

Follow-up semi-structured interviews were conducted with eight randomly sampled respondents. The interviewers mainly asked about the features whose response distributions differed significantly between the two groups, with the aim of gaining deeper insight into respondents’ views. The interview questions are shown in the Appendix. As all students were foreign-language majors, the interviews were conducted in English. The data were transcribed and analyzed thematically.

Findings

Survey results

Table 5 summarizes the percentages of SALC learners’ responses at each scale point for all eight items. The rating averages, which represent the overall tendency of responses to each item, show that most SALC respondents considered almost every item very important or important (rating averages below 2.00), the exception being Item 3. The percentages for each item support this tendency: for each of the other seven items, more than 70 percent of responses were “Very important” or “Important.” For Item 3, by contrast, responses were more scattered; only about half were “Very important” or “Important,” and Item 3 received the highest percentage of “Not so important” responses of all eight items.

Table 5

The Percentages of SALC Learners’ Responses to Each Question Item

Note. N = 103. All numbers in the table represent percentages. Row sums may not equal 100% because of rounding. The rating average was calculated by multiplying the number of responses at each scale point by its value (1: Very important, 2: Important, 3: A little bit important, 4: Not so important), summing these products across the row, and dividing by 103. A lower rating average indicates that learners perceived the item as more important.


Interestingly, comparing SALC learners’ responses to each item with those of ELSAC learners (Table 3) revealed both similarities and differences between the two studies, which took place in different SAC environments. The overall pattern of ELSAC learners’ percentages pointed to a similarity: for most items other than Item 3, more than 80 percent of ELSAC learners’ responses were “Very important” or “Important,” slightly higher than the proportion among SALC learners.

At the same time, however, there were noticeable differences between the two studies. First, a larger proportion of SALC learners than ELSAC learners considered Item 3 important (Table 6). Combining the “Very important” and “Important” responses, about 50% of SALC learners indicated that self-access materials should look nice. By contrast, more than two-thirds of ELSAC learners thought that materials did not necessarily need to look nice.

Table 6

The Comparison of Students’ Responses to Q3 “Look nice”

The second difference was that a larger proportion of SALC learners than ELSAC learners considered Item 8 unimportant (Table 7). Almost all learners in Reinders and Lewis’ study thought that materials should definitely tell them how to learn best, whereas only 30% of SALC learners thought so. Moreover, about 25% of SALC learners tended to think that it was not important. This result may imply that Reinders and Lewis’ learners were more aware of the process of “learning how to learn” than their SALC counterparts.

Table 7

The Comparison of Students’ Responses to Q8 “Tell me how to learn best”

To determine whether these differences between the two studies were statistically significant, the cross tabulations were analyzed with Fisher’s exact tests. Of the eight items, only Item 3 (p = .012) and Item 8 (p = .003) showed a significant difference. This suggests that, for these two items, the distribution of responses differed between the two groups of learners. For Item 3, SALC learners appear more likely than ELSAC learners to value the appearance of learning materials. Similarly, ELSAC learners appear to place greater emphasis than SALC learners on whether a material shows learners how to learn.

Based on these results, we concluded that two categories, “Look nice” (Item 3) and “Tell me how to learn best” (Item 8), needed further investigation. The next section describes what the follow-up interviews revealed about these two points.

Interview results

Concerning Item 3, whether a good material should look nice, interviewees reported that the use of color on both the cover and the inside of a book was attractive and appealing. For example, one interviewee stated, “if it’s only black and white or something…then I lose my interest in the book or material”. Another mentioned choosing books (graded readers for speed-reading practice) based on the attractiveness of their colors and pictures. Another judged colorful text inside a book to be easy to understand. Preferred content included information presented in pictorial form (charts and diagrams) and clear, understandable language, possibly with some reverse-translated explanation. For example, one stated, “graphics or pictures will give me information or important things. Because only long sentences are difficult to understand or I can’t image what does the textbook mean”. Interestingly, a few students noted that ‘looking nice’ could mean portability: many students at the university commute long distances, use their commuting time for study, and want materials that are easy to handle.

Regarding Item 8, whether a good material should tell learners how to learn best, interviewees struggled to give examples or explanations of materials that demonstrate learning how to learn. After several turns of probing by the interviewers, five features of such materials emerged. First, providing learning strategies was identified as important. For instance, one interviewee stated, taking a TOEFL test book as an example, “…so firstly I need to learn vocabulary but and secondly I have to practice a lot…just practicing and reviewing…like that…”. Second, an easy-to-navigate format was considered helpful. One mentioned, “…in my favorite books there are one picture and under the picture there are many important words are written in the list…”. Third, a ranking of essential content was described as preferable. One stated, “…it had ABC ranking like A is really important, you need to learn this stuff…”. Fourth, an introduction explaining how to study with the material was found useful. One found this feature necessary, stating, “…on the first page it shows me how I am supposed to learn from the book”. Lastly, guidance on which chapter to start with was said to be important. One stated, “…if they put like, ‘first, I have to do this, then I need to do this’, if a book directs me to study, that’s good”. The next section discusses what the quantitative and qualitative results tell us about which categories in the checklist should remain, be removed, or be modified.

Discussion

The finding that six of the eight items showed similar response distributions in the two studies suggests that Reinders and Lewis’ checklist can very likely serve as a basis for ours. It also suggests that the criteria other than visual presentation and learning to learn could remain, whereas these two need to be reconsidered in developing our checklist. Meanwhile, the insights gained from the interviews, as well as anticipated differences between the two samples (e.g., linguistic and cultural background, and the ten-year gap between the two studies), should be taken into consideration.

Pertaining to the look of the materials (Item 3), we contend that a criterion for the visual presentation of materials should be added to our checklist. The interviews revealed that “Look nice” means effective use of color and pictures (including diagrams and charts), which, for the learners studied, may be linked to the idea that their culture reflects an acute sensitivity to the look of things (Inouye, 2016). Likewise, as Hill (2013) suggests, students today may prefer elements such as color, pictures, and text layouts because they are constantly exposed to visual imagery and may therefore feel more comfortable with colorful images and graphics. Thus, a new category was incorporated, as shown in Table 8.

Table 8

A Newly Added Category Related to Visual Stimulation (Responding to Item 3)

Additionally, although it arose in only a few interviews, compactness emerged as an element of “Look nice.” Further research on this point would clarify the needs of today’s learners. Compactness could be added to the checklist if the trend proves to be significant.

In relation to Item 8, “Tell me how to learn best,” the survey results show that our target learners place significantly less importance on this factor than ELSAC learners do. This suggests that we need to deliberate carefully about which features related to “learning to learn” to include in our checklist. In the interviews, interviewees struggled to explain what “learning how to learn” meant, and their interpretations differed widely. This suggests that Item 8 should be rephrased, or accompanied by examples, in the questionnaire so that respondents better understand its intended meaning. It should also be noted that all of the interviewees happened to have rated Item 8 as important or very important in the survey. Drawing on Sakai, Takagi, and Chu (2010), we assume that respondents who rated Item 8 as less important may have less awareness of autonomous ways of learning with materials. Sakai, Takagi, and Chu note that “educational and behavioral norms in Japan simply discourage their autonomy” (p. 12). If Japanese learners do indeed tend to have a lesser degree of autonomy, this could explain why the interviewees struggled to articulate what “learning how to learn” meant. It could also mean that more guided support in autonomous strategies for using materials is needed. The preferences that the interviewees expressed after extended probing, such as showing the most essential content and providing learning strategies, were incorporated into our checklist as new sub-categories (Table 9). Whereas ELSAC’s checklist includes the sub-category “Shows how to set goals,” we eliminated it because none of our interviewees mentioned goal setting. Setting goals for learning English with materials may demand higher-order thinking than our target learners are yet ready for; that is, they may not yet have reached that stage in their journey toward becoming autonomous learners.
We hope that additional activities to facilitate engagement with learning materials will be incorporated more prominently into SAC systems. Tomlinson (2010) stresses that educators should mediate between materials and learners so that self-access materials help learners experience the language in use. We are convinced that SALC facilitators should help learners develop the skills to use materials more autonomously. The newly added features should be repeatedly trialed and tested for inter-rater reliability and practicality, a process we expect to undertake after this study.

Table 9

Modified Features of Learning to Learn (Responding to Item 8)

The present study has several limitations. First, the number of survey responses was small in comparison with the potential body of target users. The questionnaire should also have included an “Other” option so that respondents could indicate their own ideas; this would have captured a wider range of individual preferences rather than relying solely on interview data from a small number of participants. Second, follow-up interviews were conducted to gather deeper accounts of the features that showed distinct distributions, but only a small number of interviewees were randomly selected and asked about particular features; more systematic sampling should have taken place. Finally, because the present study limited its scope to developing a pre-evaluation tool for a large number of materials, further research should explore how whilst- and post-use evaluation, which target a limited number of materials, can be systematically implemented in SACs. Such investigation would provide more precise and reliable information about learners’ preferences for learning materials.

Conclusions

The present study illustrated one attempt to adapt a SAC materials evaluation instrument, originally developed more than a decade ago in New Zealand, to a current Japanese EFL context. The results suggest that the instrument could be a sound basis for developing our own context-specific evaluation checklist. Specifically, materials that provide clear instructions, language at an appropriate level, plenty of practice and examples, feedback (e.g., answers), easy navigation, and visual appeal (e.g., color and images) appear to be valued features of good SAC materials for Japanese EFL learners. The results also hinted that these learners may lack knowledge of, or training in, how to use learning materials autonomously; an evaluative instrument may therefore require different sub-categories for learning how to learn, such as showing the most essential content and providing learning strategies. Further investigation is also needed into their apparent preference for easily portable materials.

Despite these limitations, we believe that this study contributes to current scholarship by presenting a procedure for adapting an evaluation checklist to another context. It also identified preferred features of SAC materials that may be unique to Japanese EFL learners. These features should help SAC staff in Japan make better decisions about which materials to purchase and recommend to their learners. A next step will be to test and negotiate the proposed checkpoints further so as to make the checklist more rigorous and reliable. We hope that the proposed procedure will encourage SAC facilitators elsewhere to develop their own context-specific evaluation checklists.

Notes on the Contributors

Atsumi Yamaguchi is currently a Learning Advisor at Meijo University and a senior lecturer at Kanda University of International Studies in Japan. Her research interests include learner autonomy, self-access, intercultural pragmatics, and intercultural communication.

Erin Okamoto is an Assistant Professor at Woosong University in Daejeon, South Korea and a former learning advisor/lecturer in the Self-Access Learning Centre at Kanda University of International Studies in Japan.

Neil Curry is a learning advisor in the Self-Access Learning Centre at Kanda University of International Studies in Japan. He has been teaching in Japan for 10 years and his primary interests are in Foreign Language Anxiety and language advising.

Katsuyuki Konno is a Lecturer at Ryukoku University, Japan. His research interests include individual difference variables in language learning, especially learner motivation, and their effects on learning behaviors. He is currently interested in motivational dynamics during language learning tasks and activities.

Acknowledgments

The authors are very thankful to Akiyuki Sakai for his contribution in obtaining survey data. They also thank the reviewers for their insightful feedback.

References

Benson, P. (2011). Teaching and researching: Autonomy in language learning. Harlow, UK: Pearson.

Creswell, J. W. (2015). A concise introduction to mixed methods research. Thousand Oaks, CA: Sage.

Edlin, C., & Bonner, E. (2018). Augmented reality and crowdsourced feedback to help learners navigate self-access materials. Relay Journal, 1(2), 429-440. Retrieved from https://kuis.kandagaigo.ac.jp/relayjournal/issues/sep18/

Gardner, D. (1999). The evaluation of self-access centers. In B. Morrison (Ed.), Experiments and evaluation in self-access language learning (pp. 111-122). Hong Kong: The Hong Kong Association for Self-Access Learning and Development.

Gardner, D., & Miller, L. (1999). Establishing self-access: From theory to practice. Cambridge, NY: Cambridge University Press.

Hill, J. A. (2013). The visual elements in EFL coursebooks. In B. Tomlinson (Ed.), Developing materials for language teaching (pp. 157-166). London, UK: Bloomsbury.

Inouye, C. S. (2016). Japanese visual culture. Retrieved from http://emerald.tufts.edu/~cinouye/

Jones, F. R. (1993). Beyond the fringe: A framework for assessing teach-yourself materials for ab initio English-speaking learners. System, 21, 453-469. doi:10.1016/0346-251X(93)90057-N

Kato, S., & Mynard, J. (2016). Reflective dialogue: Advising in language learning. New York, NY: Routledge.

Lockwood, F. (1998). The design and production of self-instructional materials. London, UK, and Sterling, VA: Kogan Page and Stylus Publishing.

McGrath, I. (2016). Materials evaluation and design for language teaching. Edinburgh, UK: Edinburgh University Press.

Mishan, F., & Timmis, I. (2015). Materials development for TESOL. Edinburgh, UK: Edinburgh University Press.

Mizumoto, A. (2010). A comparison of statistical tests for a small sample size: Application to corpus linguistics and foreign language education and research. ISM Survey Research Report, 238, 1-14.

Reinders, H., & Cotterall, S. (2001). Language learners learning independently: How autonomous are they? TTWi, 65, 85-96. doi:10.1075/ttwia.65.09rei

Reinders, H., & Lewis, M. (2005). Examining the ‘self’ in self-access materials. rEFLections, 7, 46-53. Retrieved from https://unitec.researchbank.ac.nz/handle/10652/2463

Reinders, H., & Lewis, M. (2006). The development of an evaluative checklist for self-access materials. ELT Journal, 60(2), 272-278. Retrieved from https://unitec.researchbank.ac.nz/handle/10652/2468

Sakai, S., Takagi, A., & Chu, M. (2010). Promoting learner autonomy: Student perceptions of responsibilities in a language classroom in East Asia. Educational Perspectives, 43(1&2), 12-27. Retrieved from https://eric.ed.gov/?id=EJ912111

Sheerin, S. (1989). Self-access. Oxford, UK: Oxford University Press.

Tomlinson, B. (2010). Principles and procedures for self-access materials. Studies in Self-Access Learning Journal, 1(2), 72-86. Retrieved from https://sisaljournal.org/archives/sep10/tomlinson/

Tomlinson, B. (2013a). Developing principled frameworks for materials development. In B. Tomlinson (Ed.), Developing materials for language teaching (pp. 95-118). London, UK: Bloomsbury.

Tomlinson, B. (2013b). Materials evaluation. In B. Tomlinson (Ed.), Developing materials for language teaching (pp. 21-48). London, UK: Bloomsbury.

Appendix

Interview questions

- You rated Item 3 as important/very important. Why is it important? What kinds of things attract you?

- If you think of a material that tells you how to learn, can you think of an example?